Android Things allows you to make amazing IoT devices with simple code. In this post, I’ll show you how to put the pieces together to build a more complex project!

This won’t be a complete top-to-bottom tutorial. I’ll leave you lots of room to expand and customize your device and app—so you can explore and learn further on your own. My goal is to have fun while working with this new development platform, and show you that there’s more to Android Things than just blinking LEDs.

What Are We Building?

Half the fun of an Internet of Things project is coming up with the “thing”. For this article, I’ll build a cloud-connected doorbell, which will take a picture when someone approaches, upload that image to Firebase, and trigger an action. Our project will require a few components before we can start:

- Raspberry Pi 3B with Android Things on a microSD card

- Raspberry Pi camera

- Motion detector (component: HC-SR501)

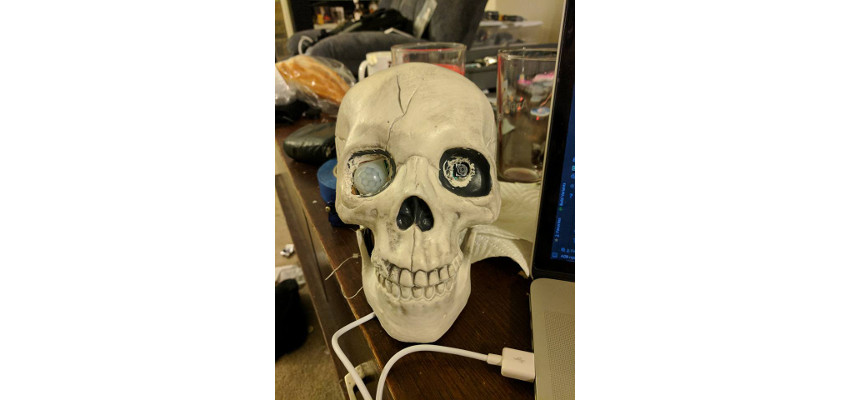

In addition, you can customize your project to fit your own creative style and have some fun with it. For my project, I took a skeleton decoration that had been sitting on my porch since Halloween and used that as a casing for my project—with the eyes drilled out to hold the camera and motion detector.


I also added a servomotor to move the jaw, which is held closed with a piece of elastic, and a USB speaker to support text-to-speech capabilities.

You can start this project by building your circuit. Be sure to note what pin you use for your motion detector and how you connect any additional peripherals—for example, the connection of the camera module to the camera slot on your Raspberry Pi. With some customization, everyone’s end product will be a little different, and you can share your own finished IoT project in the comments section for this article. For information on hooking up a circuit, see my tutorial on creating your first project.

- Android Things: Your First Project (Android SDK)


Detecting Motion

There are two major components that we will use for this project: the camera and the motion detector. We’ll start with the motion detector. This requires a new class that reads digital signals from our GPIO pin. When motion is detected, the class triggers a callback that we can listen for in our MainActivity. For more information on GPIO, see my article on Android Things peripherals.

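    private GpioCallback mInterruptCallback = new GpioCallback() {
        @Override
        public boolean onGpioEdge(Gpio gpio) {
            try {
                // Read the pin once and report only actual state changes.
                boolean value = gpio.getValue();
                if (value != mLastState) {
                    mLastState = value;
                    performMotionEvent(mLastState ? State.STATE_HIGH : State.STATE_LOW);
                }
            } catch (IOException e) {
                Log.e("HCSR501", "Unable to read motion detector pin", e);
            }
            // Returning true keeps the callback registered for future edges.
            return true;
        }
    };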

If you have been following along with the Android Things series on Envato Tuts+, you may want to try writing the complete motion detector class on your own, as it is a simple digital input component. If you’d rather skip ahead, you can find the entire component written in the project for this tutorial.
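If you do write the class yourself, the core of it is the state-change filter shown in the callback above. Here is a minimal plain-Java sketch of that filter, with no Android dependencies so you can test it on your desktop. The `MotionEdgeTracker` class and its `Listener` interface are hypothetical stand-ins for the real `HCSR501` component and its `OnMotionDetectedEventListener`, and the sketch assumes the pin starts out low.

```java
/** Plain-Java sketch of the state-change filtering a motion detector class performs. */
class MotionEdgeTracker {

    /** Mirrors the HIGH/LOW states used in the article's callback. */
    enum State { STATE_HIGH, STATE_LOW }

    /** Hypothetical listener, standing in for OnMotionDetectedEventListener. */
    interface Listener {
        void onMotionDetectedEvent(State state);
    }

    private final Listener listener;
    private boolean lastState = false; // assumption: the pin starts low (no motion)

    MotionEdgeTracker(Listener listener) {
        this.listener = listener;
    }

    /** Feed one pin reading; the listener fires only when the value changes. */
    void onReading(boolean value) {
        if (value != lastState) {
            lastState = value;
            listener.onMotionDetectedEvent(value ? State.STATE_HIGH : State.STATE_LOW);
        }
    }
}
```

In the real component, `onReading()` would be driven by the GPIO edge callback rather than called by hand.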

In your Activity you can instantiate your HCSR501 component and associate a new HCSR501.OnMotionDetectedEventListener with it.

    private void initMotionDetection() {
        try {
            mMotionSensor = new HCSR501(BoardDefaults.getMotionDetectorPin());
            mMotionSensor.setOnMotionDetectedEventListener(this);
        } catch (IOException e) {
            Log.e("Camera App", "Unable to open motion detector", e);
        }
    }

    @Override
    public void onMotionDetectedEvent(HCSR501.State state) {
        // Only react when motion starts, not when the sensor settles back to low.
        if (state == HCSR501.State.STATE_HIGH) {
            performCustomActions();
        }
    }
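Depending on how your sensor is tuned, a visitor lingering at the door may produce several high events in a row. If you would rather take one picture per visit, one option is a small cooldown gate in front of `performCustomActions()`. The following is only a sketch: the `MotionCooldown` class is hypothetical and not part of the project's source, and the window length is something you would tune yourself.

```java
/** Sketch of a cooldown gate: lets an action run at most once per time window. */
class MotionCooldown {
    private final long cooldownMs;
    private long lastTriggerMs;
    private boolean hasTriggered = false;

    MotionCooldown(long cooldownMs) {
        this.cooldownMs = cooldownMs;
    }

    /** Returns true if the action may run at nowMs, and records that it ran. */
    boolean tryTrigger(long nowMs) {
        if (hasTriggered && nowMs - lastTriggerMs < cooldownMs) {
            return false; // still inside the cooldown window
        }
        hasTriggered = true;
        lastTriggerMs = nowMs;
        return true;
    }
}
```

In your Activity, you could then wrap the call as `if (cooldown.tryTrigger(SystemClock.elapsedRealtime())) { performCustomActions(); }`.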

Once your motion detector is working, it’s time to take a picture with the Raspberry Pi camera.

Taking a Picture

One of the best ways to learn a new tool or platform quickly is to go through the sample code provided by the creators. In this case, we will use a class created by Google for taking a picture using the Camera2 API. If you want to learn more about the Camera2 API, you can check out our complete video course here at Envato Tuts+.

- Take Pictures With Your Android App (Android SDK)


You can find all of the source code for the camera class in this project’s sample, though the main method that you will be interested in is takePicture(). This method will take an image and return it to a callback in your application.

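    public void takePicture() {
        if (mCameraDevice == null) {
            // The camera was never opened, so there is nothing to capture.
            return;
        }
        try {
            // mSessionCallback is notified once the capture session is configured.
            mCameraDevice.createCaptureSession(
                    Collections.singletonList(mImageReader.getSurface()),
                    mSessionCallback,
                    null);
        } catch (CameraAccessException cae) {
            Log.e("Camera App", "Unable to create capture session", cae);
        }
    }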

Once this class has been added to your project, you will need to add the ImageReader.OnImageAvailableListener interface to your Activity, initialize the camera from onCreate(), and listen for any returned results. When your results are returned in onImageAvailable(), you will need to convert them to a byte array for uploading to Firebase.

    private void initCamera() {
        // Run camera work on its own thread so it does not block the main thread.
        mCameraBackgroundThread = new HandlerThread("CameraInputThread");
        mCameraBackgroundThread.start();
        mCameraBackgroundHandler = new Handler(mCameraBackgroundThread.getLooper());
        mCamera = DoorbellCamera.getInstance();
        mCamera.initializeCamera(this, mCameraBackgroundHandler, this);
    }

    @Override
    public void onImageAvailable(ImageReader imageReader) {
        Image image = imageReader.acquireLatestImage();
        // Copy the first image plane into a byte array.
        ByteBuffer imageBuf = image.getPlanes()[0].getBuffer();
        final byte[] imageBytes = new byte[imageBuf.remaining()];
        imageBuf.get(imageBytes);
        // Release the image so the reader can reuse its buffer.
        image.close();
        onPictureTaken(imageBytes);
    }
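The byte-array conversion in `onImageAvailable()` is plain `java.nio` code, so you can try it outside of Android. The sketch below isolates that step in a hypothetical `BufferCopy` helper. It copies with `get()` instead of calling `array()`, since a buffer is not guaranteed to expose a backing array.

```java
import java.nio.ByteBuffer;

/** Sketch of the ByteBuffer-to-byte[] step used when an image becomes available. */
class BufferCopy {

    /** Copies the bytes between the buffer's position and limit into a new array. */
    static byte[] toByteArray(ByteBuffer buffer) {
        byte[] bytes = new byte[buffer.remaining()];
        buffer.get(bytes); // advances the buffer's position to its limit
        return bytes;
    }
}
```

Note that the copy drains the buffer: calling `toByteArray()` a second time on the same buffer returns an empty array unless you rewind it first.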

Uploading a Picture

Now that you have your image data, it’s time to upload it to Firebase. While I won’t go into detail on setting up Firebase for your app, you can follow along with this tutorial to get up and running. We will be using Firebase Storage to store our images, though once your app is set up for using Firebase, you can do additional tasks such as storing data in the Firebase database for use with a companion app that notifies you when someone is at your door. Let’s update the onPictureTaken() method to upload our image.

    private void onPictureTaken(byte[] imageBytes) {
        if (imageBytes != null) {
            FirebaseStorage storage = FirebaseStorage.getInstance();
            // Name each upload after the capture time so every file gets a distinct name.
            StorageReference storageReference = storage.getReferenceFromUrl(FIREBASE_URL)
                    .child(System.currentTimeMillis() + ".png");
            UploadTask uploadTask = storageReference.putBytes(imageBytes);
            uploadTask.addOnFailureListener(new OnFailureListener() {
                @Override
                public void onFailure(@NonNull Exception exception) {
                    Log.e("Camera App", "Image upload failed", exception);
                }
            }).addOnSuccessListener(new OnSuccessListener<UploadTask.TaskSnapshot>() {
                @Override
                public void onSuccess(UploadTask.TaskSnapshot taskSnapshot) {
                    // Upload finished; trigger any follow-up action here.
                }
            });
        }
    }

Once your images are uploaded, you should be able to see them in Firebase Storage.
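The upload code names every file with a .png extension regardless of how the camera encoded the image. If you want the extension to reflect the actual bytes, one option is to check the file signature before building the name. This is a sketch only: the `ImageNames` helper is hypothetical, and it recognizes just the standard JPEG and PNG signatures, falling back to `.bin` for anything else.

```java
import java.util.Arrays;

/** Sketch: pick an upload file name whose extension matches the image bytes. */
class ImageNames {
    // Standard 8-byte PNG file signature.
    private static final byte[] PNG_SIGNATURE = {
        (byte) 0x89, 'P', 'N', 'G', 0x0D, 0x0A, 0x1A, 0x0A
    };

    /** Returns ".jpg", ".png", or ".bin" based on the leading bytes. */
    static String extensionFor(byte[] data) {
        if (data != null && data.length >= 3
                && (data[0] & 0xFF) == 0xFF
                && (data[1] & 0xFF) == 0xD8
                && (data[2] & 0xFF) == 0xFF) {
            return ".jpg"; // JPEG files start with FF D8 FF
        }
        if (data != null && data.length >= PNG_SIGNATURE.length
                && Arrays.equals(Arrays.copyOf(data, PNG_SIGNATURE.length), PNG_SIGNATURE)) {
            return ".png";
        }
        return ".bin"; // unknown encoding
    }

    /** Builds a timestamp-based name, like the one used for the upload. */
    static String nameFor(long timestampMs, byte[] data) {
        return timestampMs + extensionFor(data);
    }
}
```

You could then pass `ImageNames.nameFor(System.currentTimeMillis(), imageBytes)` to `child()` when creating the storage reference.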

Customize

Now that you have what you need to build the base functionality for your doorbell, it’s time to really make this project yours. Earlier I mentioned that I did some customizing by using a skeleton with a moving jaw and text-to-speech capabilities. Servos can be implemented by importing the servo library from Google and including the following code in your MainActivity to set up and run the servo.

    private final int MAX_MOUTH_MOVEMENT = 6;
    int mouthCounter = MAX_MOUTH_MOVEMENT;

    private Runnable mMoveServoRunnable = new Runnable() {
        private static final long DELAY_MS = 1000L; // 1 second between jaw movements
        private double mAngle = Float.NEGATIVE_INFINITY;

        @Override
        public void run() {
            if (mServo == null || mouthCounter <= 0) {
                return;
            }
            try {
                // Alternate between the two extremes to open and close the jaw.
                if (mAngle <= mServo.getMinimumAngle()) {
                    mAngle = mServo.getMaximumAngle();
                } else {
                    mAngle = mServo.getMinimumAngle();
                }
                mServo.setAngle(mAngle);
                mouthCounter--;
                mServoHandler.postDelayed(this, DELAY_MS);
            } catch (IOException e) {
                Log.e("Camera App", "Unable to move servo", e);
            }
        }
    };

    private void initServo() {
        try {
            mServo = new Servo(BoardDefaults.getServoPwmPin());
            mServo.setAngleRange(0f, 180f);
            mServo.setEnabled(true);
        } catch (IOException e) {
            Log.e("Camera App", e.getMessage());
            return; // don't init handler. Stuff broke.
        }
    }

    private void moveMouth() {
        // Cancel any movement still in progress before starting a new cycle.
        if (mServoHandler != null) {
            mServoHandler.removeCallbacks(mMoveServoRunnable);
        }
        mouthCounter = MAX_MOUTH_MOVEMENT;
        mServoHandler = new Handler();
        mServoHandler.post(mMoveServoRunnable);
    }
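To see what the runnable does without wiring up a servo, here is a plain-Java sketch of the same toggle logic. The `JawToggle` class is hypothetical and only models the sequence of angles. Like the runnable, it starts from negative infinity, so the first move always goes to the maximum angle.

```java
/** Sketch of the jaw toggle: alternates between two angles a fixed number of times. */
class JawToggle {
    private final double minAngle;
    private final double maxAngle;
    private double angle = Double.NEGATIVE_INFINITY; // forces the first move to maxAngle
    private int remaining;

    JawToggle(double minAngle, double maxAngle, int moves) {
        this.minAngle = minAngle;
        this.maxAngle = maxAngle;
        this.remaining = moves;
    }

    /** True while there are movements left in this cycle. */
    boolean hasNext() {
        return remaining > 0;
    }

    /** Returns the next angle to send to the servo and uses up one movement. */
    double next() {
        angle = (angle <= minAngle) ? maxAngle : minAngle;
        remaining--;
        return angle;
    }
}
```

With a range of 0 to 180 degrees and six movements, the sequence is 180, 0, 180, 0, 180, 0, so an even movement count leaves the servo at its minimum angle. Whether that corresponds to an open or closed jaw depends on how you mounted the servo.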

When your app is finished with the servo, for example in onDestroy(), you will also need to cancel any pending movement, close the servo, and clear your reference to it.

    // Stop any jaw movement that is still scheduled.
    if (mServoHandler != null) {
        mServoHandler.removeCallbacks(mMoveServoRunnable);
    }
    if (mServo != null) {
        try {
            mServo.close();
        } catch (IOException e) {
            Log.e("Camera App", "Unable to close servo", e);
        } finally {
            mServo = null;
        }
    }
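If your project ends up with several peripherals, the same close-and-clear pattern repeats for each one. Assuming your drivers implement `AutoCloseable`, you could fold it into a small helper. The `PeripheralCloser` class below is a hypothetical sketch, and it logs to `System.err` only so that it runs outside of Android.

```java
/** Sketch of a reusable close-and-clear helper for peripherals. */
class PeripheralCloser {

    /** Closes the resource if present; always returns null so the caller can clear its field. */
    static <T extends AutoCloseable> T closeQuietly(T resource) {
        if (resource != null) {
            try {
                resource.close();
            } catch (Exception e) {
                // On a device you would log this with Log.e() instead.
                System.err.println("Error closing resource: " + e);
            }
        }
        return null;
    }
}
```

The cleanup then becomes a single line per peripheral, such as `mServo = PeripheralCloser.closeQuietly(mServo);`.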

Surprisingly, text-to-speech is a little more straightforward. You just need to initialize the text-to-speech engine, like so:

    private void initTextToSpeech() {
        textToSpeech = new TextToSpeech(this, new TextToSpeech.OnInitListener() {
            @Override
            public void onInit(int status) {
                if (status == TextToSpeech.SUCCESS) {
                    textToSpeech.setLanguage(Locale.UK);
                    textToSpeech.setOnUtteranceProgressListener(utteranceListener);
                    // Values below 1.0 lower the pitch of the voice.
                    textToSpeech.setPitch(0.3f);
                } else {
                    // Initialization failed, so leave the engine unset.
                    textToSpeech = null;
                }
            }
        });
    }

You can play with the settings to make the voice fit your application. In the sample above, I have set the voice to have a low, somewhat robotic pitch and an English accent. When you are ready to have your device say something, you can call speak() on the text-to-speech engine.

    textToSpeech.speak("Thanks for stopping by!", TextToSpeech.QUEUE_ADD, null, "skeletontts");

On a Raspberry Pi, if you are using a servo motor, you will need to ensure that your speaker is connected over a USB port, as the analog aux connection cannot be used while a PWM signal is also being created by your device.

I highly recommend looking over Google's sample drivers to see what additional hardware you could add, and getting creative with your project. Most features that are available for Android are also supported in Android Things, including support for Google Play Services and TensorFlow machine learning. For a little inspiration, here's a video of the completed project:

Conclusion

In this article I've introduced a few new tools that you can use for building more complex IoT apps.

This is the end of our series on Android Things, so I hope you've learned a lot about the platform and that you'll use it to build some amazing projects. Creating apps is one thing, but being able to affect the world around you with your apps is even more exciting. Be creative, create wonderful things, and above all else, have fun!

Remember that Envato Tuts+ is filled with information on Android development, and you can find lots of inspiration here for your next app or IoT project.

- Android Things and Machine Learning

- How to Use Google Cloud Machine Learning Services for Android

- How to Make Calls and Use SMS in Android Apps

- Android Sensors in Depth: Proximity and Gyroscope

- Get Started With Android VR and Google Cardboard: Panoramic Images

- Create a Voice-Controlled Android App